-

W3C XML Schema documentation generator

-

An example of how eBay uses metadata embedded in their XSD to generate HTML documentation automatically

A store for products that don’t exist yet

How do you let Apple know that you want the next release of the Mini to include a Blu Ray player and how much you’d be ready to pay for it?

What about an online store that includes some of the many product concepts designed by Apple fans such as the MacCube concept below? or a product configurator of existing products that includes features not yet available from the manufacturer but that exist as technologies or as third-party add-ons?

Seems like something identified by the VRM promoters.

Only problem: would Apple care?

links for 2008-02-21

-

A video showing how a farm survived a tough winter by issuing its own notes.

The archetypal business platform on the Web

Having both a background in the business and engineering of software, a topic of particular interest to me is the archetype or pattern of successful Web businesses. By “business platform”, I mean the software that powers a business and what I want to talk about is what the business platform typically does at highly successful Web businesses.

Archetypes or patterns are not synonymous with recipes. Knowing the typical structure of a symphony does not make you a composer, or in the present case, knowing the archetypal business platform does not make you a billionaire. They do nonetheless help you in thinking and exchanging ideas about particular instances.

Ok, I won’t make you salivate longer. Here’s my attempt at formulating the archetypal business platform on the Web:

“Web companies make it easy for people to post information on the Web in a format that makes it easy for other interested people to discover, search and browse what is relevant to them, but more importantly in a way that they retain control over who gets to see the information and how. All that of course, 24/7, real-time and globally.”.

Let’s take a few examples:

- YouTube made it easy for people to post videos on the Web in a format that makes it easy for other interested people to discover, search and browse what is relevant to them, but more importantly in a way that they can add commercials to the videos being watched.

- Facebook made it easy for people to post status, pictures, messages, review, etc. in a format that makes it easy for other people to discover, search and browse those that are relevant to them (their friends’), but more importantly in a way that they can track who is interested in what and add relevant “social ads” next to the information they consume.

- Amazon started as an online bookstore, but is quickly evolving into making it easy for book writers, audio book producers and possibly in the near future vendors of other types of digital or potentially digital goods to publish their work on the Web, in a way that makes it easy for other interested people to discover, search and browse (Kindle), and buy, but more importantly in a way that they can take a commission on the resulting purchase transaction.

- Apple started as a hardware vendor, but it has quickly become a company involved in making easy and safe for music and movies producers (soon game producers) to market their digital goods online in a way that other people can easily listen to or watch (iPod, AppleTV, etc.), but more importantly in a way that they can take a commission on the resulting purchase transaction (iTunes).

- etc.

Ok, I am assuming that by now I’ve convinced you, or that I got the benefit of the doubt, or at least that I formulated correctly something that has long been obvious for you (and that you can now share easily). If not, I’m happy to learn about your viewpoint and discuss.

What is now interesting to think about, is how we can take this simple tool in thinking about the future of business on the Web. I think we can make a few easy predictions:

- Autonomous publishing software/hardware that publish information about you on their own are quite exciting, as enablers of new businesses. Example includes your location as detected by your cell phone provider, temperature/humidity data loggers (ex. temperature, humidity) used to complement existing weather sytems, 2-way navigation systems used for real-time traffic data sharing, camera that directly push pictures on the Web, etc. I think we will see more of these autonomous data publishers.

- Publishing tools that will make it easier for people to write content in a format that can be indexed, searched, browsed by others. Here, we may finally start to move away from the online form and start to see semantic extraction technologies or semantic content editors that add unambiguous meaning (microformats in the case of text) to your content as you create it.

- Consumption interfaces that will make it easier and entertaining for people to express what they are looking for in a way that a software can understand and find relevant items from an ever growing and diverse collection of digital goods.

- Consumption devices that will provide the best way possible to experience a content in a given environment. That would include screen walls for digital painting, 3D printers, etc.

(Please use the comment form to submit your ideas)

Of course, last, we will see more and more of companies trying to own the whole chain on a particular type of digital good or a particular community of people, via acquisitions or partnerships. And to mirror this, more and more communities that will try to prevent companies from doing so too much and/or for too long.

Again, this archetype is not a recipe for success. In my view, the most significant pattern of success but also the most difficult to craft is community. Another one is legal innovation. More on both, hopefully, later.

Kicking the tires with OpenCalais

[I deleted this post by mistake – this is a re-post]

OpenCalais is a software that takes in a piece of textual content in plain text or HTML format, extracts entities from it and generates an RDF graph in XML of them.

OpenCalais was brought to my attention by a post by Bob Jonkam on the microformats discuss list. I was intrigued and decided to take a look.

After the OpenCalais team helped me quickly resolve the HTTP 403 issue, I decide to give it a try with a text from the relatively recent heated “calendar (and other) items aren’t always tidy” discussion on uf-discuss , I will refer to as “football example”:

Bobby and Billy are on the same football team and on Sunday they’re playing against the Falcons, whose coach is Ron Smith. Ron Smith is Bobby and Billy’s father. The brothers are also the star quarterback and star fullback at Pittsfield High.”

The result in RDF XML can be found here. Below are in plain english the significant things that OpenCalais successfully identified:

- Billy is a Person

- Billy is mentioned at 2 places in the text (offsets 92 and 229)

- Ron Smith is a Person

- Ron Smith is mentioned at 2 places in the text (offsets 194 and 206)

Interestingly, Bobby was not identified as a Person, and obviously there is a whole lot of entities and relationships that haven’t been identified.

Obviously, the football example is probably a edge case for OpenCalais. With Reuters as a sponsor, this technology seems much more geared towards business news analysis. This is even more obvious when you look at their semantic metadata, where you find things like Person, PersonProfessional, PersonPolitical, Bankrupcy, Alliance, Acquisition, etc. But the roadmap mentions that later this year, third-party developers will be able to extend the extraction capabilities of OpenCalais.

This is an interesting open initiative nonetheless and a smart move from Reuters towards being a platform. Its limitations with an edge case also shows to me how the semantic Web, just like the software market, won’t probably be “owned” by one player, but that a multitude of players with a variety of breadth and depth because there are as many representations of the world as there are cultures, communities and people, and they evolve all the time.

[Note: as a French man, when I saw the name of this service, I immediately thought of the city of Calais, the town in northern France where Le Tunnel sous La Manche (”chunnel”) starts/ends. I still can’t figure why they picked that name and can’t wait to know!]

Trying out Yahoo! Shortcuts

Yahoo! Shortcuts is a new service launched by Yahoo! on or around December 13th 2007. Yahoo! Shortcuts make it easy for bloggers to link the content in their blog posts to Yahoo! resources, such as maps, products for sale or stock quotes.

The interesting part of Yahoo! Shortcuts is in the WordPress plugin provided to insert these links. The plugin detects things as you type your post, and allows you to link them to Yahoo! resources. In this page, all these dotted underline links are Yahoo Shortcuts you can try out by passing your mouse pointer over. Examples include addresses (1600 Pennsylvania Avenue NW Washington, DC 20500), products (Garmin Nuvi 660), and companies (Apple).

Whenever a “Shortcut” is found, the Y! Powered Shortcuts widget is updated with the number of Shortcuts found. Also, the text of the shortcut is markup like: <span id="lw_1202511969_3" class="yshortcuts">Apple. The last digit of the id seems to be the number of the shortcut, and the number “1202511969” seems to be an id that is unique to the post. It’s worth noting the cryptic nature of these tags: there seems to be no way to tell that a particular shortcut is an address or product or company name.

I also noticed that it seems that when an entity like Apple is mentioned several times, it is only detected once. I guess that this is to avoid cluttering the post with tons of shortcuts.

The next step consists in reviewing the post. For each Shortcut detected, it is possible to remove it, convert it to a badge or keep it as a link (default). A badge means that the Yahoo! content will be embedded in the page itself. A link means that the Yahoo! content will appear when the link is hovered.

I have to say I’m quite impressed with the annotation technology. “999 Mission, San Francisco” is not detected, but “999 Mission Street, San Francisco” is. “123 Mission St, San Francisco” is as well. There seems to be support for detection in other languages as well: 150 rue saint-jacques, paris was detected correctly for instance. I imagine that this technology is the same one I noticed Yahoo uses in Yahoo! Mail to detect emails, phone numbers, addresses, events, etc. The FAQ also mentions that there are ways to improve the chances of the service detecting some objects, which gives credit to my “plain old english formats” theory (more on this hopefully in a coming post).

One current limitation of this technology is the detection of the relations between individual pieces of data. For instance, in Yahoo! Mail, if I have a phone number next to a name next to an email, the email and the phones will be detected as individual pieces of data, and I will be given the possibility to create a new contact for the phone number or to add it to an existing contact. This would not be an issue with a microformatted hCard, but writing an hCard today requires more skills and time than writing plain english.

On the usability side, from a post writer standpoint, I think the whole thing is pretty well designed although it would be nice to have the post reviewing step integrated in WordPress editor (TinyMCE).

The main issue I see is from a reader standpoint: they have no choice as to what to do with the detected content. The only thing you can do with an address is to look it up on Yahoo! map or search related Yahoo! news. For a company, the only thing you can do it to look its stock performance on Yahoo! Finance or search for it, etc. But of course, that is the whole point for Yahoo!: drives more traffic towards Yahoo! properties. I also don’t know if the licensing terms allow the style to be changed (technically, it seems it’s possible since all the style-related files are part of the plugin), but I think that would be a necessity as these Yahoo badges may not satisfy everyone’s taste and may repel some users and change their perception of the quality of the blog.

Microsoft Offers to Buy Yahoo: Semantic analysis by OpenCalais

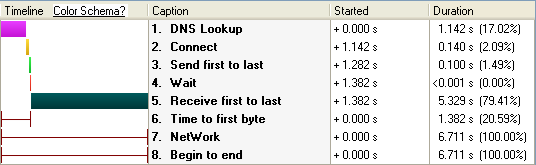

My first try of OpenCalais semantic extractor made me realize that OpenCalais is currently better suited for business/financial news analysis. So, I wanted to give it a try with such a piece of news, and what better and more relevant example than the recent announcement by Microsoft of their offer to buy Yahoo. I picked the news story as reported by Bloomberg.com and submitted it to the OpenCalais semantic extractor Web service released a couple days ago.

Here is the (huge) RDF XML document returned from this piece of news. According to my HTTP Analyzer, from begin to end, the request/response took 6.711 seconds, the lion’s share of which was spent in transport (~80%). The actual Web server processing time was reported as being almost insignificant:

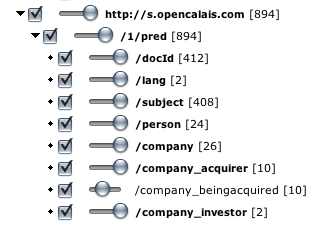

In terms of resources identified, OpenCalais did a very impressive job at identifying all the persons, companies, positions of persons at companies, relationship between companies, etc. Here is the list of entities identified as viewed in MIT SMILE Welkin application and the Circle RDF graph visualization:

Here is the first issue though. This looks to me like… a haystack, and finding out the needle, i.e. what is actually relevant, would seem to be a big challenge. This is why I think it would be valuable for a semantic extractor like OpenCalais to weigh RDF statements, for instance according to their position in the text. Typically for instance, the title and first few paragraph would contain the most relevant information. I believe it would be great (maybe I missed it though) for the RDF document to return the most relevant RDF statement first.

Here are the other issues I noticed with regards to the facts extracted:

First, the name of the company who offered to buy Yahoo is “Microsoft offers”:

<rdf:Description

rdf:about="http://d.opencalais.com/genericHasher-1/2fafdca3-9c94-3cec-a757-ab4b5d4ace74">

<rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/Acquisition"/>

<!--Microsoft Offers-->

<c:company_acquirer

rdf:resource="http://d.opencalais.com/comphash-1/a58f842b-c683-36e8-bc8f-458fd86ca664"/>

<!--Yahoo-->

<c:company_beingacquired

rdf:resource="http://d.opencalais.com/comphash-1/a7244973-6b02-36b0-8a6c-18cf4b59ee4b"/>

<c:status>planned</c:status>

</rdf:Description>

<rdf:Description

rdf:about="http://d.opencalais.com/comphash-1/a58f842b-c683-36e8-bc8f-458fd86ca664">

<rdf:type rdf:resource="http://s.opencalais.com/1/type/em/e/Company"/>

<c:name>Microsoft Offers</c:name>

</rdf:Description>

Second, Jerry Yang is reported as Co-founder of Facebook:

<rdf:Description

rdf:about="http://d.opencalais.com/genericHasher-1/d53dfdcd-ed76-3722-82bc-8b55cf65cc2f">

<rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/PersonProfessional"/>

<!--Jerry Yang-->

<c:person

rdf:resource="http://d.opencalais.com/pershash-1/f9e2dce6-da09-3734-a86e-e4cd8e7b6df8"/>

<c:position>Co-founder </c:position>

<!--Facebook Inc.-->

<c:company

rdf:resource="http://d.opencalais.com/comphash-1/6e117dae-5f83-3641-a394-b626053412cb"/>

</rdf:Description>

Last, it seems that Google is reported as an investor in Microsoft…

<rdf:Description

rdf:about="http://d.opencalais.com/genericHasher-1/d63adca9-71c2-3268-a47a-86aa2b35408f">

<rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/CompanyInvestment"/>

<!--Microsoft-->

<c:company

rdf:resource="http://d.opencalais.com/comphash-1/49bf454b-3fed-3244-94fc-b3d5115f7df4"/>

<!--Google Inc.-->

<c:company_investor

rdf:resource="http://d.opencalais.com/comphash-1/6a5c8712-9c0f-39bd-8655-9a100c09ecba"/>

<c:status>known</c:status>

</rdf:Description>

<rdf:Description

rdf:about="http://d.opencalais.com/dochash-1/990c3a0a-89f3-31cc-901c-d563fdcc9aa6/Instance/125">

<rdf:type rdf:resource="http://s.opencalais.com/1/type/sys/InstanceInfo"/>

<c:docId

rdf:resource="http://d.opencalais.com/dochash-1/990c3a0a-89f3-31cc-901c-d563fdcc9aa6"/>

<c:subject

rdf:resource="http://d.opencalais.com/genericHasher-1/d63adca9-71c2-3268-a47a-86aa2b35408f"/>

<!--CompanyInvestment: company: Microsoft; company_investor: Google Inc.; status: known; -->

<c:detection>[in the past 12 months after years of ]investments in Microsoft's own business[

failed to help the company gain share. ]</c:detection>

<c:offset>3852</c:offset>

<c:length>39</c:length>

</rdf:Description>

Obviously the service has access to little to no context besides the document itself.

My conclusion is that OpenCalais would benefit from a statement weighting computation engine that would take into account both known facts, document structure and level of confidence in the analysis to return the developer with the most relevant information. I wonder if OpenCalais sees this on their roadmap, if they have it already (and missed it, but I don’t think so), or if they don’t consider this as part of the functionality they provide and expect the application developer to do the work.

Another question is why the software is offered as a service when most of the time is spent on transport and when there seems to be little context used outside of the submitted document.

Alexander Payne’s 14e Arondissement

This short from the movie Paris, je t’aime is the one that moved me the most. I don’t know why it is on YouTube, but I’m so happy I found it. Enjoy!

The meaning of vcard’s “fn”

Martin McEvoy recently resurrected a thread on the replacement of “fn” by “title” in the hAudio microformat. The main point is that “fn” (formatted name) is a bit cumbersome for a song’s name/title. This offered me the opportunity to give my interpretation of the meaning of “formatted name”, which I will summarize here.

A formatted name is a locale-specific (typically of the locale the name is from) serialization of a structured representation of a person’ name. It is useful for display and print, for instance on the label of an envelope, where conformance to local name ordering practices is desired for politeness reasons.

Now some explanation of why formatted names are important for people’s names.

For those who don’t know, there are different name ordering conventions in different parts of the world. Just as an example, given name first, family name second is common in Western countries, whereas family name first, given name second is common in Eastern countries.

So, computer people who want to store names of persons for different places in the world have to deal with the following problem: they want to be able to distinguish family names from given names and other names (middle, mother’s, etc.) since it helps for searching, for identification and for avoiding duplicate entries, but they don’t want to be impolite either and send a letter with a name formatting that does not comply with the locale of the person.

One solution to this problem could be to identify all the different types of name ordering conventions, for instance, by locale and locale region, then code these rules in some programming language, then keep the information about the locale of the person, or infer it from the country they were born in, or the place they live, or something else, and then compute the formatted name from the database or structured or tokenized representation.

That is obviously a lot of work, and also not completely fail-proof. For instance, a Japanese person living in the U.S. might still want their name to be printed on letters with the last name first. If you add honorific titles, prefixes, suffixes, abbreviated forms, etc. to the problem mix, it is even more work. Usually at this point, what the computer people do is go back to the problem they were addressing (usually not an international name storage problem, but something else like a customer data storage problem for a U.S. bank or an electronic business card problem) and realize that if they spend so much time on each issue (“Why are we doing this again?”), and that no much will come out of their work if they continue on this path (at least no fast enough for the next quarter). This is the exact situation that myself and my IFX colleagues faced a couple years ago, and I’m hypothesizing that this is the same problem that the vCard people faced.

The only easy solution is to store the name of the person in a structured format, but keep a copy of the preferred formatting in a separate field. This is what we did at IFX, and this is what I’m guessing the vCard people did.

All this to say that the meaning of “formatted name” is to me very specific to those names for which there is value in maintaining two representations, one structured and one serialized, because reconstructing one from the other is difficult. To go back to the original thread raised by Martin, and given the above, I don’t think that “fn” should be used for a song’s name.

Punctuation as markup

Like many, I spent most of my days in markup. Sometimes to the point that I forget what it was invented for, to address what problem. During these times of doubt and confusion, I like to go back in time and read the works of the pioneers.

Yesterday night, I read this great article Markup Systems and the Future of Scholarly Text Processing, which dates back from before XML, HTML, SGML, or GML even!

There is a section on the different kinds of markup, in particular punctuational markup and descriptive markup. After reading this, it occurred to me that the following three representations are strictly equivalent (markup is highlighted in bold):

- Plain text markup using the period “markup” to signify the end a sentence that is a statement (see. this section of HyperGrammar for a grammar refresher):

The teacher asked who was chewing gum. - XML markup that specifies that a piece of text is a sentence and a statement (notice no end punctuation here):

<sentence><statement>The teacher asked who was chewing gum</statement></sentence> - Plain old semantic HTML that specifies that a piece of text is a sentence and a statement (notice no end punctuation here):

<span class="sentence statement">The teacher asked who was chewing gum</span>

In the last case, you can use CSS code to add a period at the end of each sentence that is a statement:

.sentence.statement:after {

content: '.'

}

This means also that if we are being strict, combining punctuation with HTML or XML markup, when descriptive markup and CSS styling suffice, is a bad practice since it is semantically redundant, or in other words, one of the two is useless.

There are plenty other examples to explore: for instance, quotes (“) as markup that is an alternative to the <q></q> or <blockquote></blockquote> HTML markup. This may be pushed to the extreme that each space in plain text is viewed as markup to distinguishes words from one another.

This is probably an epiphany just for me, but I thought I’d post it anyway!