My first try of the OpenCalais semantic extractor made me realize that OpenCalais is currently better suited to business and financial news analysis. So I wanted to try it on such a piece of news, and what better and more relevant example than Microsoft's recent announcement of its offer to buy Yahoo? I picked the story as reported by Bloomberg.com and submitted it to the OpenCalais semantic extractor Web service, released a couple of days ago.

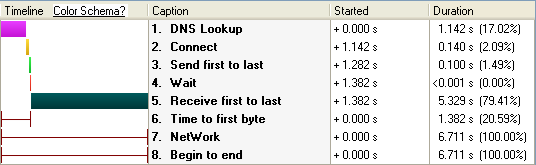

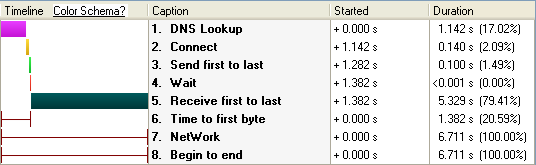

Here is the (huge) RDF/XML document returned for this piece of news. According to my HTTP Analyzer, the request/response took 6.711 seconds from beginning to end, the lion’s share of which (~80%) was spent in transport. The actual Web server processing time was reported as almost insignificant:

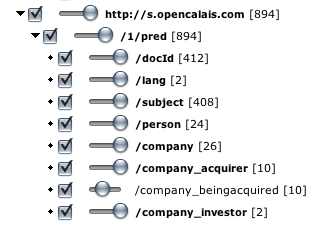

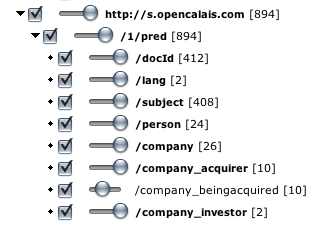

In terms of resources identified, OpenCalais did a very impressive job of identifying all the persons, companies, positions of persons at companies, relationships between companies, etc. Here is the list of identified entities as viewed in the MIT SIMILE Welkin application and the Circle RDF graph visualization:

Here is the first issue though. This looks to me like… a haystack, and finding the needle, i.e. what is actually relevant, looks like a big challenge. This is why I think it would be valuable for a semantic extractor like OpenCalais to weigh RDF statements, for instance according to their position in the text. Typically, the title and the first few paragraphs contain the most relevant information. I believe it would be great (maybe I missed it though) for the RDF document to return the most relevant RDF statements first.
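To make the idea concrete, here is a minimal sketch of position-based ordering. It assumes each extracted statement can be tied to a character offset in the source text (the response does carry a `c:offset` element on its instance records). The `Statement` class, the linear decay, the acquisition offset and the document length are all hypothetical choices of mine, not anything OpenCalais provides.

```python
from dataclasses import dataclass


@dataclass
class Statement:
    label: str   # human-readable summary of the RDF statement
    offset: int  # character offset of the detection in the source text


def position_weight(offset: int, doc_length: int) -> float:
    """Linear decay: 1.0 at the start of the document, 0.0 at the very end."""
    if doc_length <= 0:
        raise ValueError("doc_length must be positive")
    clamped = min(max(offset, 0), doc_length)
    return 1.0 - clamped / doc_length


def rank_by_position(statements, doc_length):
    """Sort statements from most to least relevant under this heuristic."""
    return sorted(
        statements,
        key=lambda s: position_weight(s.offset, doc_length),
        reverse=True,
    )


statements = [
    # Offset 3852 is the one reported in the response for this statement.
    Statement("CompanyInvestment: Microsoft / Google Inc.", 3852),
    # Hypothetical offset: the acquisition is announced near the top of the story.
    Statement("Acquisition: Microsoft Offers / Yahoo", 20),
]

# Hypothetical document length, for illustration only.
ranked = rank_by_position(statements, doc_length=5000)
for s in ranked:
    print(round(position_weight(s.offset, 5000), 3), s.label)
```

A linear decay is the simplest possible choice; a step function (title, lead paragraphs, rest) would probably match how news stories are written more closely.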

Here are the other issues I noticed with regard to the facts extracted:

First, the name of the company that offered to buy Yahoo is reported as “Microsoft Offers”:

<rdf:Description rdf:about="http://d.opencalais.com/genericHasher-1/2fafdca3-9c94-3cec-a757-ab4b5d4ace74">
  <rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/Acquisition"/>
  <!--Microsoft Offers-->
  <c:company_acquirer rdf:resource="http://d.opencalais.com/comphash-1/a58f842b-c683-36e8-bc8f-458fd86ca664"/>
  <!--Yahoo-->
  <c:company_beingacquired rdf:resource="http://d.opencalais.com/comphash-1/a7244973-6b02-36b0-8a6c-18cf4b59ee4b"/>
  <c:status>planned</c:status>
</rdf:Description>
<rdf:Description rdf:about="http://d.opencalais.com/comphash-1/a58f842b-c683-36e8-bc8f-458fd86ca664">
  <rdf:type rdf:resource="http://s.opencalais.com/1/type/em/e/Company"/>
  <c:name>Microsoft Offers</c:name>
</rdf:Description>
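For anyone who wants to check this kind of thing without reading the raw XML, here is a minimal sketch that resolves the acquirer of each `Acquisition` statement to its `c:name` using Python's standard library. The excerpt does not show the namespace declarations, so the URI bound to the `c` prefix and the `rdf:RDF` wrapper are assumptions on my part; the function name `acquisitions` is also mine.

```python
import xml.etree.ElementTree as ET

RDF_NS = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
# Assumption: the excerpt does not show which namespace the "c" prefix maps to.
C_NS = "http://s.opencalais.com/1/pred/"
NS = {"rdf": RDF_NS, "c": C_NS}
ABOUT = f"{{{RDF_NS}}}about"
RESOURCE = f"{{{RDF_NS}}}resource"

# The two descriptions from the response, wrapped in an assumed rdf:RDF root.
SAMPLE = f"""<rdf:RDF xmlns:rdf="{RDF_NS}" xmlns:c="{C_NS}">
  <rdf:Description rdf:about="http://d.opencalais.com/genericHasher-1/2fafdca3-9c94-3cec-a757-ab4b5d4ace74">
    <rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/Acquisition"/>
    <c:company_acquirer rdf:resource="http://d.opencalais.com/comphash-1/a58f842b-c683-36e8-bc8f-458fd86ca664"/>
    <c:company_beingacquired rdf:resource="http://d.opencalais.com/comphash-1/a7244973-6b02-36b0-8a6c-18cf4b59ee4b"/>
    <c:status>planned</c:status>
  </rdf:Description>
  <rdf:Description rdf:about="http://d.opencalais.com/comphash-1/a58f842b-c683-36e8-bc8f-458fd86ca664">
    <rdf:type rdf:resource="http://s.opencalais.com/1/type/em/e/Company"/>
    <c:name>Microsoft Offers</c:name>
  </rdf:Description>
</rdf:RDF>"""


def _name_of(element, names):
    """Return the c:name for the referenced resource, or its URI if unnamed."""
    if element is None:
        return None
    uri = element.get(RESOURCE)
    return names.get(uri, uri)


def acquisitions(rdf_xml):
    """List acquirer, target and status for every Acquisition statement."""
    root = ET.fromstring(rdf_xml)
    descriptions = root.findall("rdf:Description", NS)

    # Map each described resource URI to its c:name, when it has one.
    names = {}
    for desc in descriptions:
        name = desc.find("c:name", NS)
        if name is not None:
            names[desc.get(ABOUT)] = name.text

    results = []
    for desc in descriptions:
        rdf_type = desc.find("rdf:type", NS)
        if rdf_type is None:
            continue
        if not rdf_type.get(RESOURCE, "").endswith("/em/r/Acquisition"):
            continue
        results.append({
            "acquirer": _name_of(desc.find("c:company_acquirer", NS), names),
            "target": _name_of(desc.find("c:company_beingacquired", NS), names),
            "status": desc.findtext("c:status", default=None, namespaces=NS),
        })
    return results


for deal in acquisitions(SAMPLE):
    print(deal)
```

Because the Yahoo company description is not part of the excerpt, the target falls back to its resource URI here; run against the full response it would resolve to a name as well.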

Second, Jerry Yang is reported as Co-founder of Facebook:

<rdf:Description rdf:about="http://d.opencalais.com/genericHasher-1/d53dfdcd-ed76-3722-82bc-8b55cf65cc2f">
  <rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/PersonProfessional"/>
  <!--Jerry Yang-->
  <c:person rdf:resource="http://d.opencalais.com/pershash-1/f9e2dce6-da09-3734-a86e-e4cd8e7b6df8"/>
  <c:position>Co-founder </c:position>
  <!--Facebook Inc.-->
  <c:company rdf:resource="http://d.opencalais.com/comphash-1/6e117dae-5f83-3641-a394-b626053412cb"/>
</rdf:Description>

Last, it seems that Google is reported as an investor in Microsoft…

<rdf:Description rdf:about="http://d.opencalais.com/genericHasher-1/d63adca9-71c2-3268-a47a-86aa2b35408f">
  <rdf:type rdf:resource="http://s.opencalais.com/1/type/em/r/CompanyInvestment"/>
  <!--Microsoft-->
  <c:company rdf:resource="http://d.opencalais.com/comphash-1/49bf454b-3fed-3244-94fc-b3d5115f7df4"/>
  <!--Google Inc.-->
  <c:company_investor rdf:resource="http://d.opencalais.com/comphash-1/6a5c8712-9c0f-39bd-8655-9a100c09ecba"/>
  <c:status>known</c:status>
</rdf:Description>
<rdf:Description rdf:about="http://d.opencalais.com/dochash-1/990c3a0a-89f3-31cc-901c-d563fdcc9aa6/Instance/125">
  <rdf:type rdf:resource="http://s.opencalais.com/1/type/sys/InstanceInfo"/>
  <c:docId rdf:resource="http://d.opencalais.com/dochash-1/990c3a0a-89f3-31cc-901c-d563fdcc9aa6"/>
  <c:subject rdf:resource="http://d.opencalais.com/genericHasher-1/d63adca9-71c2-3268-a47a-86aa2b35408f"/>
  <!--CompanyInvestment: company: Microsoft; company_investor: Google Inc.; status: known; -->
  <c:detection>[in the past 12 months after years of ]investments in Microsoft's own business[
failed to help the company gain share. ]</c:detection>
  <c:offset>3852</c:offset>
  <c:length>39</c:length>
</rdf:Description>
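The instance record makes it possible to see what text triggered the statement. Judging from this single example, `c:detection` seems to be laid out as `[text before]matched text[text after]`, and `c:length` is the length of the matched text; both are inferences of mine, not documented behavior. Under that assumption, a small sketch like the following (the helper name `split_detection` is hypothetical) splits the string and confirms that the matched phrase is 39 characters long and that Google does not appear anywhere in the detection window.

```python
import re

# Assumed layout, inferred from one example: [prefix]exact[suffix].
# It would be ambiguous if the source text itself contained square brackets.
DETECTION = re.compile(
    r"^\[(?P<prefix>.*?)\](?P<exact>.*?)\[(?P<suffix>.*)\]$",
    re.DOTALL,
)


def split_detection(detection: str):
    """Split a detection string into (prefix, exact, suffix)."""
    match = DETECTION.match(detection.strip())
    if match is None:
        raise ValueError("detection does not follow the assumed layout")
    return match.group("prefix"), match.group("exact"), match.group("suffix")


# Values copied from the InstanceInfo record in the response.
SAMPLE_DETECTION = (
    "[in the past 12 months after years of ]"
    "investments in Microsoft's own business"
    "[\nfailed to help the company gain share. ]"
)
SAMPLE_LENGTH = 39

prefix, exact, suffix = split_detection(SAMPLE_DETECTION)
print("matched text:", exact)
print("length matches c:length:", len(exact) == SAMPLE_LENGTH)
print("Google in window:", "Google" in prefix + exact + suffix)
```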

Obviously the service has access to little to no context besides the document itself.

My conclusion is that OpenCalais would benefit from a statement-weighting engine that takes into account known facts, document structure and the level of confidence in the analysis, so that the developer gets the most relevant information first. I wonder whether OpenCalais has this on its roadmap, has it already (in which case I missed it, but I don’t think so), or doesn’t consider it part of the functionality it provides and expects the application developer to do the work.
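As a rough illustration of what such an engine could compute, here is a minimal sketch that multiplies three factors: a weight for where the statement was found, a confidence value, and a penalty when the statement contradicts a known fact. Everything in it is hypothetical: the `Fact` class, the section weights, the confidence numbers (the response shown above does not include any) and the tiny table of known facts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    subject: str
    predicate: str
    obj: str
    section: str       # where the statement was detected: title, lead or body
    confidence: float  # hypothetical extractor confidence in [0, 1]


# Hypothetical weights for document structure.
SECTION_WEIGHT = {"title": 1.0, "lead": 0.8, "body": 0.5}

# A tiny stand-in for a knowledge base of already known facts.
KNOWN_FACTS = {("Jerry Yang", "co-founder of"): "Yahoo"}


def score(fact: Fact, contradiction_penalty: float = 0.1) -> float:
    """Combine structure, confidence and consistency with known facts."""
    structure = SECTION_WEIGHT.get(fact.section, SECTION_WEIGHT["body"])
    expected = KNOWN_FACTS.get((fact.subject, fact.predicate))
    # Unknown or matching statements are not penalized; contradictions are.
    prior = 1.0 if expected is None or expected == fact.obj else contradiction_penalty
    return structure * fact.confidence * prior


facts = [
    Fact("Jerry Yang", "co-founder of", "Facebook Inc.", "body", 0.9),
    Fact("Jerry Yang", "co-founder of", "Yahoo", "body", 0.9),
    Fact("Microsoft", "offers to acquire", "Yahoo", "title", 0.9),
]

# Most relevant statements first.
for fact in sorted(facts, key=score, reverse=True):
    print(round(score(fact), 3), fact.subject, fact.predicate, fact.obj)
```

With these made-up numbers, the statement from the title comes first and the Facebook statement sinks to the bottom, which is the ordering I would have liked to get back from the service.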

Another question is why the software is offered as a service when most of the time is spent in transport and little context seems to be used beyond the submitted document.